Friday, October 5, 2012

Jonathan Taplin On Decoding A Presidential Election, via Twitter

How do you figure out, in real time, what the public thinks of something like Wednesday night's first Presidential debate? For USC's Annenberg Innovation Lab, you tap into the power of Twitter, and create a tool to try to figure out the real time sentiment of Twitter's users--and account for unique things in politics like the frequent use of sarcasm--to try to figure out what people think of the candidates. We caught up with Jonathan Taplin, director of the lab, to better understand how they've been using Twitter to decode the sentiment behind the current election, and also how that technology might be used in other fields.

What is your project about?

Jonathan Taplin: What we're doing is a real time Twitter sentiment analytics tool for politics. It's a fairly complicated domain because most people who tweet on politics are snarky. They use a lot of sarcasm, and so a lot of the work has been to refine the sentiment model so that it can understand sarcasm. A lot of that involves human annotation, something we've done as a collaboration between the USC Annenberg Innovation Lab and another lab at USC, the Viterbi Signal Analysis and Interpretation Lab.

Why did you decide to focus on politics?

Jonathan Taplin: We've looked at a couple of domains. We've done some work in pop culture, specifically in the movie business and stuff like that. Those businesses are fairly straightforward. People tend to say exactly what they mean. If they like a movie, they say why, and they don't usually spend lots of time denigrating a film. But, in the domain of politics, for reasons I'm not quite sure about--maybe something to do with how polarized America is--much of the conversation is very negative and very sarcastic. From a natural language processing point of view, that's a much harder challenge. When we first started this work, I remember a tweet that said "I'm so happy that Michele Bachmann threw her tinfoil hat into the ring!" Needless to say, computers thought that was actually a very positive tweet for Michele Bachmann. The meaning of phrases like "tinfoil hat" or "Jim Crow" is something you or I would know, but is not in the lexicon of most computer language applications. So, a lot of what we do is human annotation. First we used Mechanical Turk to do that, and now we're just asking everyone who uses the tool to annotate things they think are incorrect. Slowly, over the process, we're refining the model so that it gets better and better.
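
To make the problem Taplin describes concrete, here is a minimal Python sketch of how a word-list scorer misreads that tweet, and how a phrase flagged by a human annotator can correct it. This is an illustration only, not the lab's actual model: the word lists, the phrase list, and the function names are all hypothetical.

```python
import re

# Hypothetical toy lexicon (an assumption for illustration, not the lab's vocabulary).
POSITIVE = {"happy", "great", "love", "win"}
NEGATIVE = {"sad", "awful", "hate", "lose"}

# Hypothetical phrases that human annotators have flagged as derisive.
ANNOTATED_NEGATIVE_PHRASES = {"tinfoil hat"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def naive_score(text):
    """Count positive words minus negative words, ignoring context."""
    words = tokenize(text)
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def annotated_score(text):
    """Start from the naive score, then force a negative score
    when an annotator-flagged phrase appears in the text."""
    score = naive_score(text)
    lowered = text.lower()
    if any(phrase in lowered for phrase in ANNOTATED_NEGATIVE_PHRASES):
        score = -abs(score) - 1
    return score


tweet = "I'm so happy that Michele Bachmann threw her tinfoil hat into the ring!"
print(naive_score(tweet))      # positive: only "happy" is recognized
print(annotated_score(tweet))  # negative: "tinfoil hat" was flagged by a human
```

A real system would feed such annotations back into a trained classifier rather than hard-code a phrase list; the sketch only shows why the human-labeled examples matter.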

What are you hoping to do with all of this?

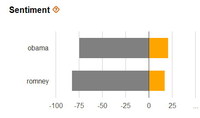

Jonathan Taplin: We got about 10 million tweets last night, and we're really trying to understand who is tweeting, what they are tweeting about, and what it means. The postmortem is a much more complicated thing, and our people in the research group are slicing and dicing this data right now, looking at all these tweets in lots of different ways. We're trying to figure out if we can use past affiliation and things like hashtags, such as #romney2012, to indicate if they're a partisan for Romney, versus #obama2012. We'd also like to understand the connection between using terms like win or winner and various candidate names. We're just basically trying to decipher the meaning behind all of this.
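
The hashtag idea can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not the lab's method: the mapping from hashtag to candidate, the tie-handling rule, and the function name `infer_leaning` are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical mapping from campaign hashtags to a candidate label.
PARTISAN_HASHTAGS = {"#romney2012": "romney", "#obama2012": "obama"}


def infer_leaning(tweets):
    """Guess a user's leaning from the campaign hashtags in their tweets.

    Returns "romney", "obama", or None when there is no signal or a tie.
    """
    counts = Counter()
    for text in tweets:
        for tag in re.findall(r"#\w+", text.lower()):
            side = PARTISAN_HASHTAGS.get(tag)
            if side is not None:
                counts[side] += 1
    if not counts:
        return None
    ranked = counts.most_common(2)
    # Treat an exact tie between the two sides as "unknown".
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


print(infer_leaning(["Great night! #Romney2012", "Watching the debate"]))
```

A hashtag alone is a weak signal, since people also use an opponent's hashtag mockingly, which is one reason this kind of inference would need to be combined with the sarcasm-aware sentiment model.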

How accurate is this as a measure of US public opinion?

Jonathan Taplin: Clearly, we want to compare this data to classic polling data, and we don't for a minute pretend this is predictive of the election. We do think that this real time sentiment analytics is new and important, because it acts as a million person focus group. In that sense, it gives us the ability to see how things are trending during the course of the evening, which we think is very important. Beyond that, whether the Twitter demographic represents an overlay of the voting public is not something we're ready to say. There is lots of work to do to understand, demographically, who they are and how they present themselves. I don't think we're ready for that yet, and that's fine because we're not trying to sell this as a product to anyone. We have the luxury of trying to take a rigorous, academic approach on this.

Can you talk about the joint effort between Annenberg and the USC Engineering school and how that works?

Jonathan Taplin: USC's Annenberg Innovation Lab, although it is associated with the Annenberg School, is very much an interdisciplinary lab. There are as many people working in the lab from Viterbi as from the Annenberg School of Communications. It's that unique combination of social scientists and computer scientists that makes it a unique place on the campus. The computer scientists are interested in the new tasks as a way to parse language and understand language, and social scientists need the help of computer scientists, because trying to manually look at 1,300 tweets a second is something we're not going to do. That combination of social science and computer science, we think, is one of the unique things we're doing as a lab.

How well did the system perform last night during the debate?

Jonathan Taplin: We had a few hiccups, as we've never seen that kind of volume before, but I think we learned something about parallel processing. By the next debate, I think we'll have fewer hiccups. I think we're pretty satisfied, and we didn't lose any data. We now have a corpus of 10 million tweets to look at and understand what happened during the evening.

What's the next step for your project?

Jonathan Taplin: We're going to try and think about the whole notion of real time analytics, and how it can be applied to other domains. For instance, the Nielsen ratings for TV essentially try to give you real time analytics on who is watching what show. But, they don't really tell you that much. They only tell you the channel that is turned on. You could be in another room making a sandwich. However, if you're actively tweeting about a TV show, whether that's The Voice or anything else, we know that at that exact moment, you are paying attention. We think that same ability to take a show, minute by minute, just like the Presidential debate, and see the ebb and flow from minute to minute, is the next big challenge. I think if we do it right, this could be really useful to producers of TV shows, to see what the social conversation was. Needless to say, big popular shows like The Voice and American Idol have close to a million people tweeting about them all at the same time, and you can diagnose what is happening.
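
The minute-by-minute view Taplin describes amounts to bucketing scored tweets by the minute in which they were posted. The Python sketch below shows one simple way to do that. It assumes each tweet has already been given a timestamp and a numeric sentiment score; the function name `sentiment_by_minute` and the sample data are hypothetical, not taken from the lab's tool.

```python
from collections import defaultdict
from datetime import datetime


def sentiment_by_minute(scored_tweets):
    """Group (timestamp, score) pairs by minute.

    Returns a dict mapping each minute to (tweet_count, average_score),
    ordered by time.
    """
    buckets = defaultdict(list)
    for timestamp, score in scored_tweets:
        # Truncate the timestamp to the start of its minute.
        minute = timestamp.replace(second=0, microsecond=0)
        buckets[minute].append(score)
    return {
        minute: (len(scores), sum(scores) / len(scores))
        for minute, scores in sorted(buckets.items())
    }


# Made-up sample data for illustration only.
sample = [
    (datetime(2012, 10, 3, 21, 0, 5), 1.0),
    (datetime(2012, 10, 3, 21, 0, 40), -1.0),
    (datetime(2012, 10, 3, 21, 1, 10), 0.5),
]
for minute, (count, average) in sentiment_by_minute(sample).items():
    print(minute.strftime("%H:%M"), count, average)
```

Tracking the count alongside the average keeps volume spikes visible, so a minute with a handful of tweets is not read the same way as a minute with thousands.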

Thanks!